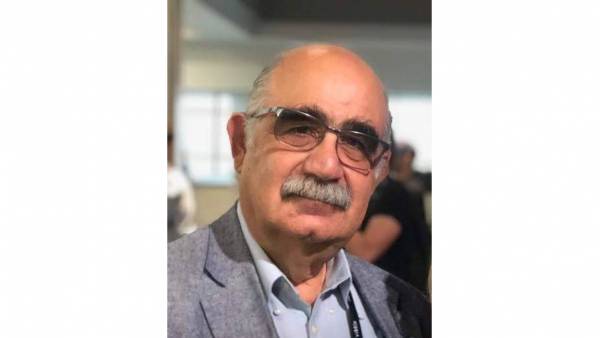

Latin America. Radio Televisión Española (RTVE) and VSN presented the progress of their joint project to improve the automatic generation of metadata for content from the Corporation's Archive using Artificial Intelligence.

To explore what Artificial Intelligence could do for its archive, RTVE put out to public tender a project to automatically generate metadata, using Artificial Intelligence, for 11,000 hours of content from its Archive, most notably material produced by TVE in the 1960s and 1970s.

VSN decided to bid for the project because its media management platform, VSNExplorer MAM, integrates with the main Artificial Intelligence engines and because the company can offer this technology as a cloud service. For this bid, VSN based its proposal on the engine from the company Etiqmedia. In the tender, VSN's proposal received the best combined score on technical and economic criteria, which won it the contract.

Artificial Intelligence at the service of metadata

The project has two phases: a test phase, carried out over the last four months, and the definitive implementation of the service, which began in October and will last one year. During the first phase, the systems were installed and the teams and workflows between RTVE and VSN were established. In these first steps, 160 hours of video and audio were processed successfully.

During the process, documents from the RTVE archive are ingested into the VSNExplorer MAM platform. Each one includes a media file and an XML document with information about the content, which is reflected in the asset that is created. As soon as this content enters the system, the automatic metadata process is launched with the Artificial Intelligence engine, and all the extracted information is shown in a single centralized interface.

For audio, this technology can produce within a few minutes a full text transcription with capitalization and accents, recognize the people, places, events, products, organizations and dates that are mentioned, and extract keywords and an automatic classification of the content. For video, machine vision can perform facial recognition and identify and catalog the scene, along with the objects, labels and signs that appear in the images.

All this information is available in the web interface of VSNExplorer MAM for quality control. There, users can quickly and easily review and edit the results so that they match the desired cataloging parameters. For example, they can correct the audio transcription or add people that the artificial intelligence has overlooked. Once this correction is made, the system calculates the WER (Word Error Rate), that is, the proportion of errors in the transcription. To obtain the result, it compares the segments of the transcription produced by the AI engine with the version reviewed by the documentalist.
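As an illustration of the WER figure mentioned above, here is a minimal sketch in Python using the commonly used definition: the number of word substitutions, deletions and insertions needed to turn the automatic transcript into the reviewed one, divided by the number of words in the reviewed transcript. The article does not describe how VSNExplorer MAM actually computes it, so the function name `word_error_rate`, the whitespace tokenization and the sample sentences are all assumptions made for this example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words.

    `reference` stands for the transcript reviewed by a person and
    `hypothesis` for the automatic transcript (both names are illustrative).
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen
    # so far (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # reference word missing from hypothesis (deletion)
                curr[j - 1] + 1,     # extra word in hypothesis (insertion)
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    reviewed = "the archive holds material from the sixties"
    automatic = "the archive holds materials from sixties"
    # One substitution and one deletion over seven reference words.
    print(round(word_error_rate(reviewed, automatic), 3))  # 0.286
```

This sketch compares one pair of texts; a system that works segment by segment, as the article describes, would presumably apply the same kind of comparison to each segment and combine the results. Note that with this definition the value can exceed 1 when the automatic transcript contains many extra words.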

After the process is complete, VSNExplorer MAM creates several XML files with all this information, which are sent back to the RTVE Archive. In this way, the public broadcaster's assets gain metadata that allows complete cataloging and makes them easier for RTVE users to search and retrieve.

This project is the latest in a series of collaborations between VSN and RTVE. Recently, VSNExplorer MAM was the system chosen for Hub Innovation, a pilot initiative to study news production carried out exclusively with cloud tools at small news production centers.
