Subtitling is also useful in noisy environments, for presenting programs in foreign languages, and for educational material. In the NTSC analog television system, subtitles are encoded on line 21 of the Vertical Blanking Interval (VBI). An NTSC television can decode this information and display it as subtitles on the screen. The advent of DTV refines subtitling capabilities and presents new challenges for television engineers. Subtitling information conforming to EIA-708 must be encoded into DTV digital streams, while the NTSC captions required by EIA-608 compliant receivers must be retained.

Introduction

Closed subtitling is a procedure by which the spoken text of a television program is electronically encoded so that, although invisible to a regular viewer, a decoder (inside or outside the television set) can decode the spoken word and display it within the picture area. In the NTSC analog television system in North America, the subtitling information is transmitted in the vertical interval, outside the normal visible area of the frame. With the advent of Digital Television (DTV), a new encoding method has been created, which is described later in this article.

For both NTSC and DTV, the spoken word can be transcribed into subtitles in real time or offline for later (deferred) transmission. Live, real-time transcription requires an experienced stenographer using a stenographic machine to accurately type the words as they are spoken. Until relatively recently, deferred closed subtitling was done using videotape equipment. However, the advent of compression and non-linear editing techniques allows the work to be done more quickly using more suitable equipment and programs, such as ProCAP*.

The Law in the United States

The Television Decoder Circuitry Act of 1990 requires all televisions sold in the United States with screens of 13" or larger to contain decoder circuitry that can display subtitled material. With the introduction of digital television, the Federal Communications Commission (FCC) is proposing new rules to ensure that closed subtitling services are maintained in new digital programming and supported by new digital receivers.

In 1997, the FCC adopted rules requiring an increase in the amount of subtitled programming over an eight-year transition period, with a 100% subtitling requirement for all new non-exempt programming beginning January 1, 2006.

Closed subtitling in NTSC

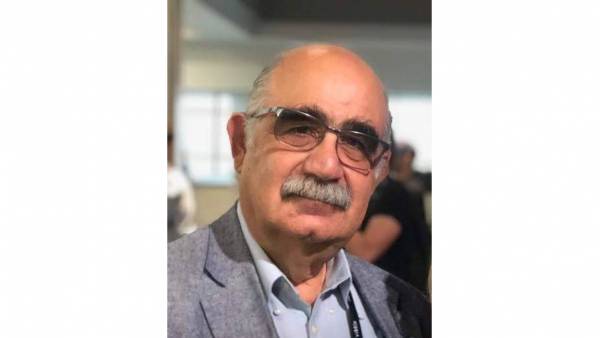

In NTSC, line 21 of the Vertical Blanking Interval (VBI) is assigned to carry the closed subtitling information. The television station uses an NTSC subtitle encoder to place the data on line 21. In the home, a recent-model TV or a special set-top box can decode the subtitles and place the text on the screen. Both fields of the NTSC signal can be used for this purpose (see Figure #1).

Field #1 carries two subtitle channels, CC1 and CC2, and two text channels, T1 and T2. Field #2 carries the additional subtitle channels CC3 and CC4, the text channels T3 and T4, and Extended Data Services (XDS) information. (Subtitles and text can be located in either field, while XDS data is restricted to field #2.) Each field can carry only two characters at a time. In NTSC there are nominally 60 fields per second, so the entire system can transmit a total of 2 × 60 = 120 characters per second. Text and XDS information change only occasionally or not at all, while subtitles change constantly. For this reason, the primary closed subtitling channels, CC1 and CC3, are given the highest priority, since they are the only services synchronized with the audio.
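The field layout and the character-rate arithmetic above can be written down as a small lookup table. This is only an illustrative sketch: the names `FIELD_SERVICES`, `field_for`, and `chars_per_second` are hypothetical, and the nominal rate of 60 fields per second is taken from the text.

```python
# Line 21 services per field, as listed in the paragraph above.
FIELD_SERVICES = {
    1: ("CC1", "CC2", "T1", "T2"),
    2: ("CC3", "CC4", "T3", "T4", "XDS"),
}

CHARS_PER_FIELD = 2      # each field carries two characters
FIELDS_PER_SECOND = 60   # nominal NTSC field rate used in the text


def field_for(service):
    """Return the field number (1 or 2) that carries the given service."""
    for field, services in FIELD_SERVICES.items():
        if service in services:
            return field
    raise ValueError(f"unknown line 21 service: {service}")


def chars_per_second(fields=(1, 2)):
    """Character capacity when the given fields are used for data."""
    occurrences = FIELDS_PER_SECOND // 2  # each field occurs 30 times per second
    return CHARS_PER_FIELD * occurrences * len(fields)


print(field_for("XDS"))          # XDS is restricted to field 2
print(chars_per_second())        # both fields: 2 x 60 = 120
print(chars_per_second((1,)))    # field 1 only: 60
```

Note that the 120 characters per second are shared by all services in both fields, which is why the synchronized channels CC1 and CC3 are served first.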

Subtitles can be displayed in the following modes: Roll Up, Paint On, or Pop Up. The Roll Up mode was designed to make the message easier to follow during live broadcasts. Each line enters from the left and then rolls up as the next line appears below it. One, two, three, or four lines remain on the screen at a time. In Paint On mode, a single line of text enters the screen, remains there briefly, and then disappears. The Pop Up mode is not as distracting as the previous two, but the entire sequence must be assembled off-screen beforehand. It then appears on the screen as a complete message at the appropriate time. In all modes, subtitles can be positioned in different places on the screen.

Text can occupy half the screen or the entire screen. XDS data can include the time of day, the name of the program, its rating, and the Transmission Signal Identifier (TSID).
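The difference between Roll Up and Pop Up can be sketched as two tiny display models. This is a simplified illustration, not a decoder implementation: the class and method names are hypothetical, and real line 21 control codes, positioning, and timing are left out.

```python
from collections import deque


class RollUpWindow:
    """Roll Up: new lines enter at the bottom and older lines scroll up."""

    def __init__(self, rows=3):
        if not 1 <= rows <= 4:
            raise ValueError("the text describes 1 to 4 visible lines")
        # A bounded deque drops the oldest (top) line automatically.
        self._lines = deque(maxlen=rows)

    def add_line(self, text):
        self._lines.append(text)

    def visible(self):
        """Lines currently on screen, top to bottom."""
        return list(self._lines)


class PopUpDisplay:
    """Pop Up: a caption is assembled off-screen, then shown all at once."""

    def __init__(self):
        self._offscreen = []
        self._onscreen = []

    def compose(self, text):
        self._offscreen.append(text)  # not visible yet

    def flip(self):
        # Swap the finished caption onto the screen and start a new one.
        self._onscreen, self._offscreen = self._offscreen, []

    def visible(self):
        return list(self._onscreen)


roll = RollUpWindow(rows=2)
for line in ("GOOD EVENING.", "HERE IS THE NEWS.", "FIRST, THE WEATHER."):
    roll.add_line(line)
print(roll.visible())  # only the two most recent lines remain

pop = PopUpDisplay()
pop.compose("HELLO THERE.")
print(pop.visible())   # still empty: the caption is off-screen
pop.flip()
print(pop.visible())   # the whole caption appears at once
```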

Closed subtitling on ITU-R 601 equipment

Television equipment operating with the 601 Serial Digital Interface (SDI) standard also uses line 21 to carry closed subtitling information. SDI 601 closed caption encoders perform a function similar to that of NTSC encoders, except that the video signal is component digital. It is important to preserve this protocol, as most viewers still have NTSC receivers. When converting the SDI signal to NTSC, most encoders add a 7.5 IRE setup to all lines, including line 21. (This is not the case in Japan, where no setup is used.) The EIA-608 specification stipulates, however, that there should be no setup on line 21. To accommodate this anomaly, the SDI closed caption encoder must encode line 21 without setup. This practice involves the use of digital video values that are outside the legal limits specified in SMPTE 125M.

Note, at this point, that the encoding process produces 7 data bits plus 1 parity bit for each character of text. As we have seen, 120 characters can be transmitted per second, which translates into a bit rate of 8 × 120 = 960 bits per second.
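The 7-bit-plus-parity format and the resulting bit rate can be illustrated with a short sketch. It assumes odd parity carried in the most significant bit, which is the convention used for line 21 data in EIA-608; the function names are hypothetical.

```python
BITS_PER_CHAR = 8        # 7 data bits + 1 parity bit
CHARS_PER_SECOND = 120   # from the two-characters-per-field calculation


def add_odd_parity(char7):
    """Return the 8-bit byte for a 7-bit character, with odd parity in bit 7."""
    if not 0 <= char7 <= 0x7F:
        raise ValueError("character must fit in 7 bits")
    ones = bin(char7).count("1")
    parity = 0 if ones % 2 == 1 else 1  # make the total number of 1s odd
    return (parity << 7) | char7


def has_odd_parity(byte):
    """True if the received byte contains an odd number of 1 bits."""
    return bin(byte & 0xFF).count("1") % 2 == 1


def encode_text(text):
    """Encode ASCII text as a list of parity-protected bytes."""
    return [add_odd_parity(ord(c)) for c in text]


encoded = encode_text("AC")
print([hex(b) for b in encoded])            # 'A' gains a parity bit, 'C' does not
print(all(has_odd_parity(b) for b in encoded))
print(BITS_PER_CHAR * CHARS_PER_SECOND)     # 960 bits per second
```

A decoder can use the parity check to discard characters damaged in transmission, at the cost of one bit in every eight.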

Closed subtitling in DTV

Closed subtitling in DTV (DTVCC) extends the analog techniques previously employed for NTSC to the new digital domain defined by the Advanced Television Systems Committee (ATSC). The standard for closed subtitling is defined by the Electronic Industries Alliance in EIA-708A. (Revision "B" is currently in the proposal phase.) The transport of the subtitle channel is defined in documents ATSC A/53 and A/54. The digital system allocates a data rate of 9600 bps for closed captions. This is 10 times the capacity of the NTSC system and opens the possibility of other features, such as graphically enriched text, multiple colors, more language channels, and many others. The DTVCC system uses Graphical User Interface (GUI) style windows to position text and other inserts on the screen. Character colors, flashing, and other attributes resemble those available in HTML. The location of the subtitles on the screen, as well as some of the appearance parameters, can be controlled by the viewer.

The DTVCC consists of 5 protocol layers: the transport layer, the packet layer, the service layer, the coding layer, and the interpretation layer. The transport layer is responsible for carrying the data from the encoder, through the transmission path, to the decoder in the home. The packet layer is a layer of protocol reconstruction that assists the decoder in identifying DTVCC data. The service layer handles the various services available within the DTVCC data stream. The subtitle channel packets that represent each service are encapsulated in sub-blocks of data to facilitate separation in the decoder. The coding layer and the interpretation layer define the DTVCC protocol.

As Figure #2 shows, the DTVCC data is carried in three separate portions of the DTV stream: the Picture User Data, the Program Map Table (PMT), and the Event Information Table (EIT). The subtitle text and window commands travel through the DTVCC transport channel (which in turn is carried in the Picture User Data). The directory of subtitle channel services is carried in the PMT and, optionally for cable, in the EIT. To ensure compatibility between analog subtitles (EIA-608) and DTV subtitles (EIA-708), the DTVCC transport channel is designed to carry both formats.
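To give a feel for what the 9600 bps allocation means in practice, the following sketch converts it into a byte budget per video frame. The frame rates used are nominal integer values chosen for illustration and are an assumption, not something stated above; the helper name is hypothetical.

```python
LINE21_BPS = 960    # NTSC line 21 capacity computed earlier
DTVCC_BPS = 9600    # data rate allocated to closed captions in DTV


def bytes_per_frame(bits_per_second, frames_per_second):
    """Average number of caption bytes available in each video frame."""
    return bits_per_second / 8 / frames_per_second


print(DTVCC_BPS / LINE21_BPS)  # capacity ratio between the two systems

# Nominal frame rates (assumed for illustration).
for fps in (24, 30, 60):
    print(fps, bytes_per_frame(DTVCC_BPS, fps))
```

At a constant 9600 bps, lower frame rates leave more caption bytes per frame, so the per-frame budget is not fixed across video formats.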

Coding considerations in DTV studios

Some of the tools and techniques needed in the television studio to carry closed subtitling compatible with both new and old receivers are described in the following paragraphs. The TV station is assumed to offer both HDTV and SDTV services.

Figure #3 shows a conventional subtitle encoder (model 8074*) used to encode subtitles on line 21 of a digital SDI 601 signal, along with an EIA-608 to EIA-708 converter (model 8075*) that meets DTV subtitling standards. The SDTV signal, complete with line 21 subtitling, text, and XDS (if present), is converted to HDTV or transmitted as analog NTSC to reach existing NTSC TVs. For DTV transmission, the information is extracted and converted to the EIA-708 protocol by the model 8075. This information is then passed to a 45 Mb/s MPEG-2 Contribution Encoder (as shown in the illustration) for networks, or to an ATSC encoder for over-the-air transmission.

For television productions originating in HDTV, the subtitling data must be added directly to the 1.5 Gb/s HDTV stream. To achieve this, an SMPTE committee is currently working on a coding standard that allows data to be carried in the Vertical Ancillary Data Space of this signal. It is being proposed that data services (including closed subtitling) can be located on any line from the second line after the line specified for switching through the last line before active video, inclusive. No specific line is reserved for specific data, but all data will be identified with an ancillary DID (Data Identification) and an SDID (Secondary Data Identification). Receiving equipment will be required to identify the data and extract the appropriate content. Each data packet will consist of an Ancillary Data Flag, the DID, the SDID, the Data Count (DC), the User Data Words, and the Checksum (CS). The values of all these parameters, for the various services, will be defined in an upcoming document entitled "Vertical Ancillary Data Mapping". When this new standard is fully implemented, a device will be available to handle the ancillary data in the HDTV signal. Similarly, the EIA-708 encoding equipment will also accept this signal and encode the closed subtitling information, as described above.

HDTV signals need to be compressed from their native data rate of 1.5 Gb/s to 19.4 Mb/s for over-the-air transmission. Because the MPEG-2 compression scheme is optimized for video compression only, ancillary data must be extracted from the DTV digital stream and processed separately. Because of this, and because of the differential delay between video, audio, and subtitles, careful engineering is needed to keep these streams synchronized.
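The packet layout described above (Ancillary Data Flag, DID, SDID, Data Count, User Data Words, Checksum) can be sketched as follows. This is an illustration under stated assumptions, not the proposed standard itself: it assumes the 10-bit word conventions of the existing SMPTE ancillary data packet format (flag words 0x000, 0x3FF, 0x3FF; bit 8 as even parity of bits 0 to 7; bit 9 as the inverse of bit 8; a 9-bit checksum), it assumes 8-bit payload bytes, and the DID and SDID values are placeholders because the real assignments belong to the mapping document mentioned in the text.

```python
# Ancillary Data Flag for 10-bit component digital video (assumed convention).
ANC_DATA_FLAG = (0x000, 0x3FF, 0x3FF)

# Placeholder identifiers for illustration only, not assigned values.
EXAMPLE_DID = 0x61
EXAMPLE_SDID = 0x01


def to_10bit_word(byte):
    """Extend an 8-bit value: b8 = even parity of b0-b7, b9 = NOT b8."""
    value = byte & 0xFF
    b8 = bin(value).count("1") & 1
    b9 = b8 ^ 1
    return (b9 << 9) | (b8 << 8) | value


def checksum_word(words):
    """9-bit sum of the low 9 bits of each word, with b9 = NOT b8."""
    total = sum(w & 0x1FF for w in words) & 0x1FF
    b9 = ((total >> 8) & 1) ^ 1
    return (b9 << 9) | total


def build_anc_packet(did, sdid, payload):
    """Assemble ADF, DID, SDID, DC, user data words, and checksum."""
    if len(payload) > 255:
        raise ValueError("Data Count must fit in 8 bits")
    body = [to_10bit_word(did), to_10bit_word(sdid), to_10bit_word(len(payload))]
    body += [to_10bit_word(b) for b in payload]
    return list(ANC_DATA_FLAG) + body + [checksum_word(body)]


packet = build_anc_packet(EXAMPLE_DID, EXAMPLE_SDID, b"\x01\x02\x03")
print([f"{w:03X}" for w in packet])
```

Receiving equipment would reverse the process: find the flag, read the DID and SDID to decide whether the packet is of interest, use the Data Count to extract the payload, and verify the checksum.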

*The ProCAP 8074, 8075, and the latest 8084, 8084 DC and 8085 models are all products developed by Evertz Microsystems Ltd.
